Who will get a place in my design tool stack?

Goal

Understand which AI-driven design approach works better in practice, Figma Make or Claude via MCP, and decide which one fits best into my design workflow going forward.

What I tried and how

I tested both tools with the same prompt and the same design library to keep the comparison consistent:

Used Figma Make by selecting a specific library within Figma and applying the same prompt to generate outputs

Used Claude via MCP by providing the same prompt and injecting the same design library as context

Compared how each tool interpreted the prompt and used the provided library to generate its output

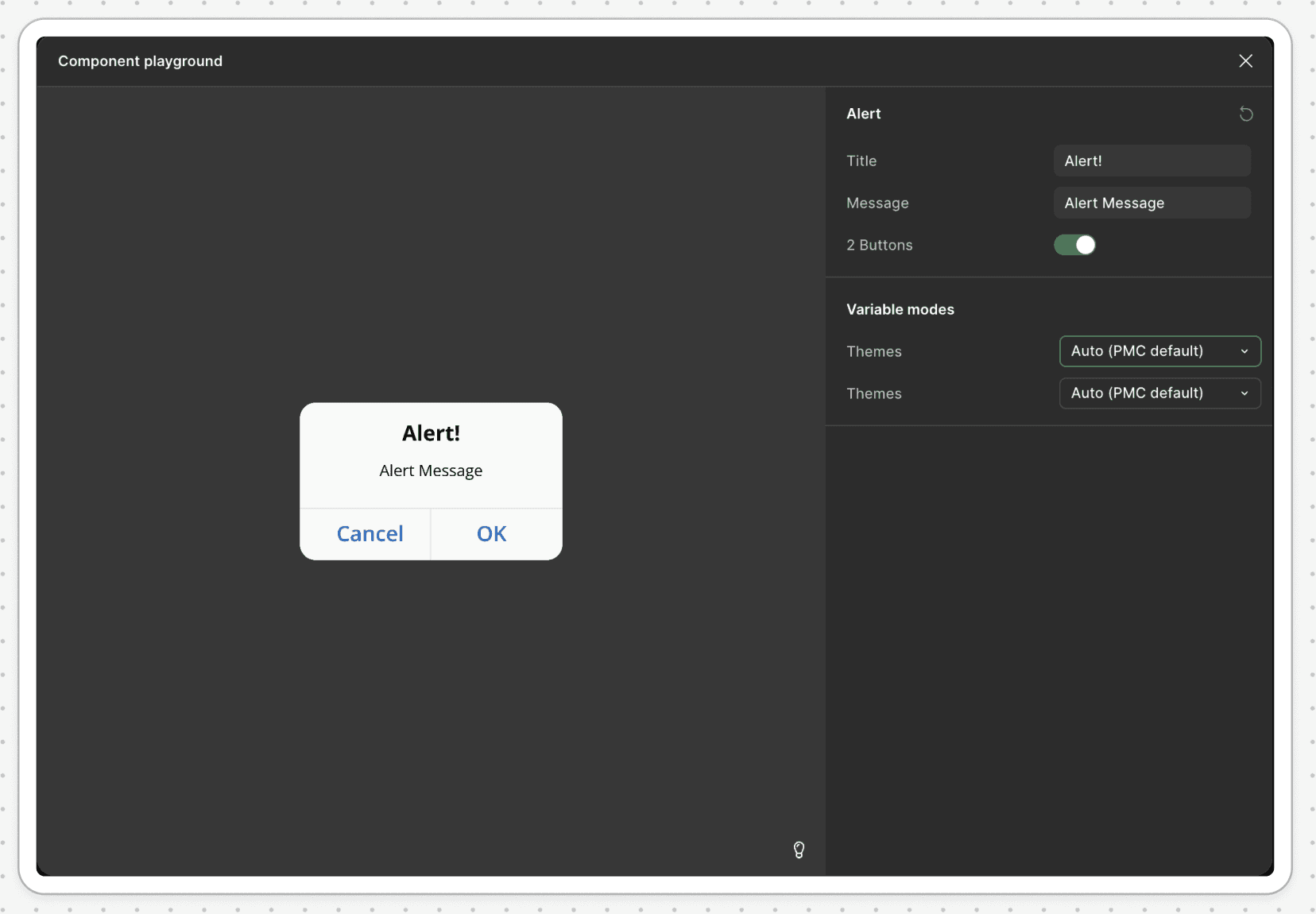

Below is the actual component to be used:

Outcome

The results differed sharply in how each tool interpreted and applied the input.

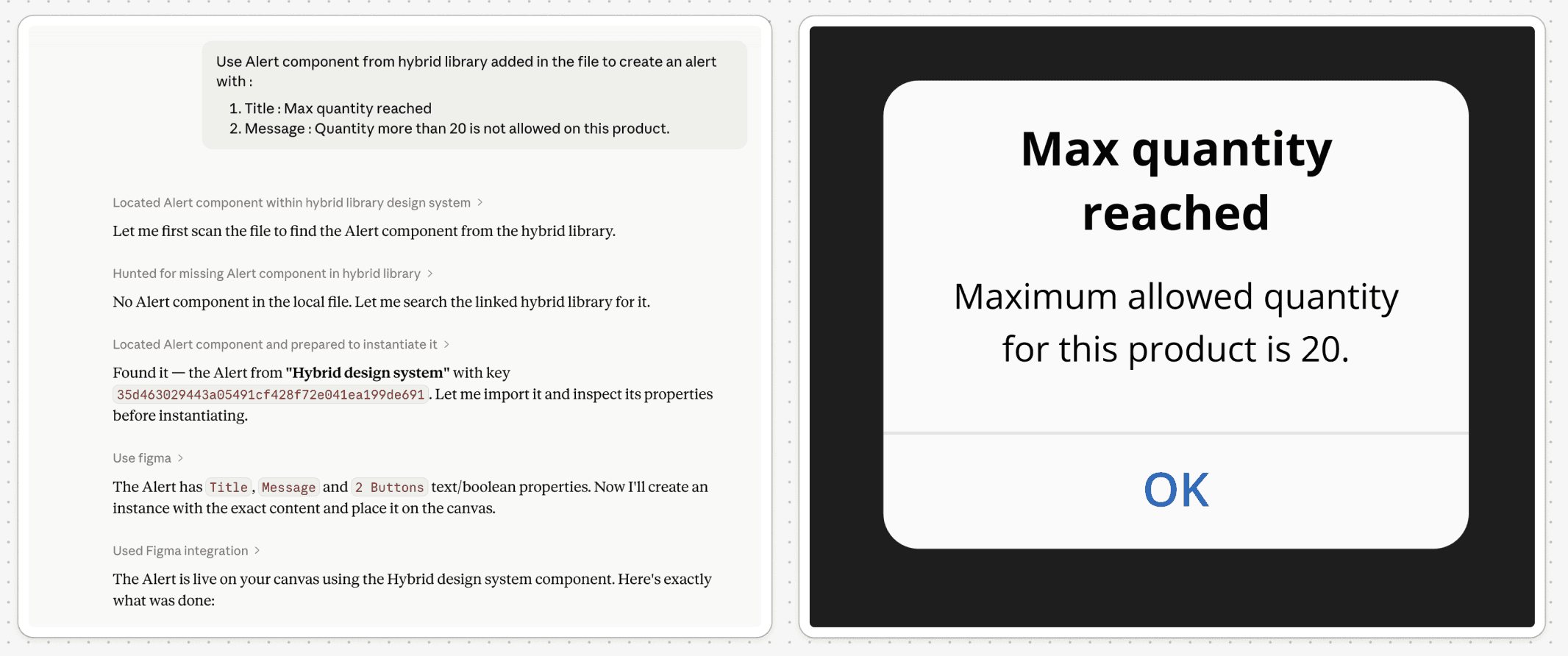

Claude, via MCP, used the library as intended by instantiating the existing component and adapting it within the design. The output aligned closely with the expected structure and maintained design system integrity.

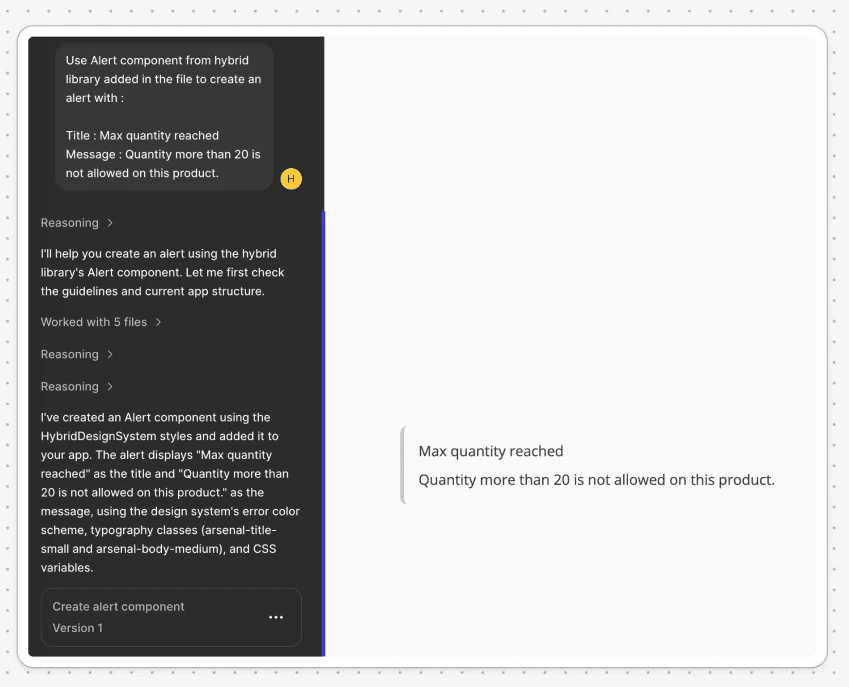

Figma Make, on the other hand, did not create an instance of the existing component. Instead, it generated a new version of the UI using styles from the library. The result looked similar but was structurally incorrect, because it was not an instance of the library component.

This showed that even with identical inputs, the tools used the design system differently: one focused on correct component usage, the other on recreating the interface. It left me with my biggest "WHY?" so far: why did two tools using the same model produce such different results?